I got to thinking the other day that the lunar images I capture with my 450D are pretty much monochrome. I know there is a tiny amount of colour there, but you really do have to drag it out. If the red, green and blue components of the light reaching the sensor are pretty much equal, I thought, then it might be possible to treat the camera as a large monochrome sensor and skip the complexity of combining colour values from the Bayer mask. So I thought I’d give it a go. The nice thing is that both approaches can start from exactly the same set of RAW files, so it should be a fair test.

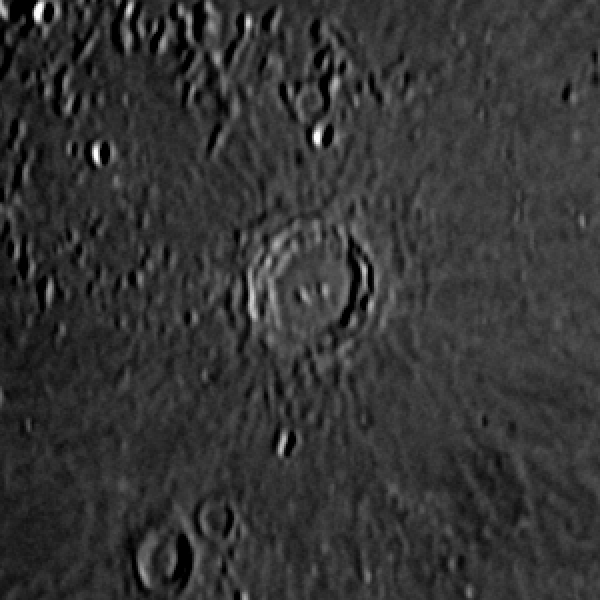

First up, here’s an image of the Moon done the way I’d normally do it, converting the colour image to monochrome before stacking.
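The conventional route can be sketched roughly as below. This is a simplified stand-in, not what a RAW converter actually does: it assumes an RGGB filter layout (check your own camera's pattern) and uses the crude 2×2 "superpixel" method, collapsing each Bayer cell into one RGB pixel, then weights the channels with Rec. 709 luma coefficients to get grey. Real converters interpolate to full resolution instead, but they too build each output pixel from neighbouring photosites. The function names are hypothetical.

```python
from typing import List, Tuple

Mosaic = List[List[float]]
RGB = Tuple[float, float, float]


def superpixel_debayer(mosaic: Mosaic) -> List[List[RGB]]:
    """Collapse each 2x2 cell of an assumed RGGB mosaic into one (R, G, B) pixel.

    Assumed layout (even width and height):
        R G
        G B
    The two green samples in each cell are averaged.
    """
    h, w = len(mosaic), len(mosaic[0])
    if h % 2 or w % 2:
        raise ValueError("mosaic dimensions must be even")
    rgb: List[List[RGB]] = []
    for y in range(0, h, 2):
        row: List[RGB] = []
        for x in range(0, w, 2):
            r = mosaic[y][x]
            g = (mosaic[y][x + 1] + mosaic[y + 1][x]) / 2
            b = mosaic[y + 1][x + 1]
            row.append((r, g, b))
        rgb.append(row)
    return rgb


def to_luminance(rgb: List[List[RGB]]) -> Mosaic:
    # Rec. 709 luma weights; Photoshop's own greyscale conversion may differ.
    return [[0.2126 * r + 0.7152 * g + 0.0722 * b for (r, g, b) in row] for row in rgb]


# A 4x4 mosaic becomes a 2x2 monochrome image: half the resolution on each axis.
mosaic = [
    [10, 20, 10, 20],
    [20, 30, 20, 30],
    [10, 20, 10, 20],
    [20, 30, 20, 30],
]
mono = to_luminance(superpixel_debayer(mosaic))
```

The halving of resolution is specific to this superpixel shortcut; the combining of neighbouring values is common to all demosaicing methods.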

And now the same RAW data files, but with each photosite’s level treated directly as a monochrome value, without the combining of neighbouring pixels that happens in the RAW-to-RGB conversion. The frames were then stacked in the same way as for the previous image.
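The “direct from RAW” idea can be sketched as keeping every photosite as its own grey pixel at full resolution. One assumption added here that the post doesn't mention: even for a neutral subject, the red-, green- and blue-filtered photosites usually record different levels, which can leave a fine checkerboard, so the sketch optionally scales each channel to match the green mean as a crude white balance. The black and white levels and the RGGB layout are hypothetical parameters; real values would come from whatever decodes the RAW file.

```python
from statistics import mean
from typing import Dict, List

Mosaic = List[List[float]]


def cfa_channel(y: int, x: int) -> str:
    # Assumed RGGB layout: R at (even, even), B at (odd, odd), G elsewhere.
    if y % 2 == 0 and x % 2 == 0:
        return "R"
    if y % 2 == 1 and x % 2 == 1:
        return "B"
    return "G"


def raw_as_mono(mosaic: Mosaic, black: float, white: float, equalise: bool = True) -> Mosaic:
    """Treat every photosite as a monochrome pixel, keeping full resolution.

    Values are rescaled so `black` maps to 0.0 and `white` to 1.0. If
    `equalise` is True, each CFA channel is multiplied by a gain that brings
    its mean up or down to the green mean.
    """
    span = white - black
    norm = [[(v - black) / span for v in row] for row in mosaic]
    if not equalise:
        return norm
    # Collect the samples belonging to each colour channel.
    samples: Dict[str, List[float]] = {"R": [], "G": [], "B": []}
    for y, row in enumerate(norm):
        for x, v in enumerate(row):
            samples[cfa_channel(y, x)].append(v)
    means = {c: mean(vals) for c, vals in samples.items()}
    # A channel with zero mean is left unscaled to avoid dividing by zero.
    gains = {c: (means["G"] / m if m else 1.0) for c, m in means.items()}
    return [
        [v * gains[cfa_channel(y, x)] for x, v in enumerate(row)]
        for y, row in enumerate(norm)
    ]


# A flat grey subject seen through RGGB filters: R reads low, B a little higher.
raw = [
    [50, 100, 50, 100],
    [100, 75, 100, 75],
    [50, 100, 50, 100],
    [100, 75, 100, 75],
]
mono = raw_as_mono(raw, black=0, white=200)
```

Unlike the superpixel sketch, the output keeps all 16 samples, so any pixel-to-pixel variation (signal or noise) survives into the result.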

I’m not sure there’s a lot in it, but I think the second is probably the better image. To my eye, the contrast is controlled a little better.

I thought it might be useful to compare a small area enlarged enough to see the individual pixels. Here are the two crops: the debayered monochrome image first, then the monochrome “direct from RAW” image:

I think these support my view that the contrast is better controlled in the second version, and perhaps that the image is even a little sharper, but unfortunately there’s also far more noise. I suspect the noise isn’t visible in the debayered image because combining pixel values smooths out small variations between neighbouring pixels. I think more experimentation is required. Perhaps stacking more images would help bring that noise under control.
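The smoothing explanation and the stacking idea both come down to averaging independent noise: the mean of N samples has a standard deviation of roughly σ/√N. The sketch below simulates a flat, noisy patch to illustrate this. It uses plain averaging of groups of four neighbouring pixels as a crude model of demosaicing (real interpolation is more involved) and a straight mean stack with no alignment or outlier rejection. All names and numbers are made up for illustration.

```python
import random
from statistics import pstdev
from typing import List


def noisy_frame(signal: float, sigma: float, n: int, rng: random.Random) -> List[float]:
    # A flat patch of n pixels: constant signal plus independent Gaussian noise.
    return [signal + rng.gauss(0.0, sigma) for _ in range(n)]


def mean_stack(frames: List[List[float]]) -> List[float]:
    # Average corresponding pixels across frames (frames assumed already aligned).
    return [sum(px) / len(px) for px in zip(*frames)]


def average_groups(pixels: List[float], k: int) -> List[float]:
    # Average each run of k neighbouring pixels: a crude stand-in for the
    # neighbour combining that demosaicing performs.
    return [sum(pixels[i:i + k]) / k for i in range(0, len(pixels) - k + 1, k)]


rng = random.Random(42)
frames = [noisy_frame(100.0, 10.0, 10_000, rng) for _ in range(16)]

single = pstdev(frames[0])                      # roughly 10
binned = pstdev(average_groups(frames[0], 4))   # roughly 10 / sqrt(4) = 5
stacked = pstdev(mean_stack(frames))            # roughly 10 / sqrt(16) = 2.5
print(f"single {single:.2f}, 4-pixel average {binned:.2f}, 16-frame stack {stacked:.2f}")
```

Stacking 16 frames should cut the random noise to about a quarter of a single frame's, which is more than the four-pixel averaging achieves, and without giving up resolution. Averaging only helps with random noise, though; fixed-pattern noise that repeats identically in every frame won't shrink this way.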

Hi there

This looks like a very logical thing to do. Could I trouble you to let me know your steps? I assume you captured RAW in your camera, but what did you do in Photoshop? Are there steps performed in the Camera Raw Filter, or elsewhere?

Any assistance much appreciated!

Ivan